Evaluation of CNN-based automatic music tagging models

Our new paper for SMC 2020, led by Minz Won, compares state-of-the-art CNN music auto-tagging models in a reference evaluation on three datasets. Best of all, every model is available in PyTorch!

https://github.com/minzwon/sota-music-tagging-models

Evaluation of CNN-based automatic music tagging models. Won, M., Ferraro, A., Bogdanov, D., & Serra, X. In 17th Sound and Music Computing Conference (SMC2020), 2020.

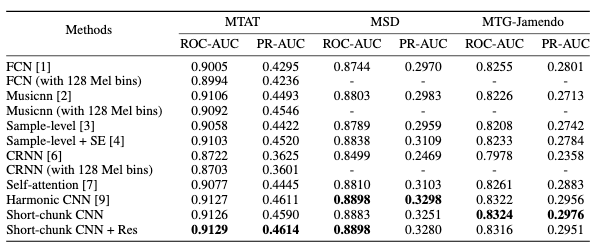

Recent advances in deep learning accelerated the development of content-based automatic music tagging systems. Music information retrieval (MIR) researchers proposed various architecture designs, mainly based on convolutional neural networks (CNNs), that achieve state-of-the-art results in this multi-label binary classification task. However, due to the differences in experimental setups followed by researchers, such as using different dataset splits and software versions for evaluation, it is difficult to compare the proposed architectures directly with each other. To facilitate further research, in this paper we conduct a consistent evaluation of different music tagging models on three datasets (MagnaTagATune, Million Song Dataset, and MTG-Jamendo) and provide reference results using common evaluation metrics (ROC-AUC and PR-AUC). Furthermore, all the models are evaluated with perturbed inputs to investigate the generalization capabilities concerning time stretch, pitch shift, dynamic range compression, and addition of white noise. For reproducibility, we provide the PyTorch implementations with the pre-trained models.
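
To make the two metrics concrete, here is a minimal sketch of how ROC-AUC and PR-AUC can be computed for a multi-label tagging task. It is not the paper's evaluation code. It assumes macro averaging over tags (the abstract does not say which averaging is used), estimates PR-AUC as average precision, and uses made-up labels and scores. The names `roc_auc`, `pr_auc`, and `macro_average` are hypothetical helpers written for this illustration.

```python
from typing import Callable, List, Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC-AUC for one tag: the probability that a random positive clip
    scores higher than a random negative clip (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def pr_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """PR-AUC for one tag, estimated as average precision: the mean of the
    precision values measured at the rank of each positive clip.
    Simplification: tied scores are ranked in input order."""
    if 1 not in labels:
        raise ValueError("need at least one positive example")
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits = 0
    precision_sum = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits


def macro_average(
    metric: Callable[[Sequence[int], Sequence[float]], float],
    y_true: List[List[int]],
    y_score: List[List[float]],
) -> float:
    """Compute the metric per tag (column), then take the unweighted mean.
    Macro averaging is an assumption of this sketch."""
    n_tags = len(y_true[0])
    per_tag = []
    for t in range(n_tags):
        tag_labels = [row[t] for row in y_true]
        tag_scores = [row[t] for row in y_score]
        per_tag.append(metric(tag_labels, tag_scores))
    return sum(per_tag) / n_tags


# Toy data (invented for illustration): 4 clips, 2 tags.
y_true = [[1, 0], [0, 1], [1, 1], [0, 0]]
y_score = [[0.9, 0.2], [0.3, 0.8], [0.6, 0.4], [0.1, 0.5]]

print(f"ROC-AUC: {macro_average(roc_auc, y_true, y_score):.3f}")  # ROC-AUC: 0.875
print(f"PR-AUC:  {macro_average(pr_auc, y_true, y_score):.3f}")   # PR-AUC:  0.917
```

In practice a library routine such as scikit-learn's `roc_auc_score` and `average_precision_score` would likely be used instead. The pure-Python version is only meant to show what each number measures: ROC-AUC scores how well positives are ranked above negatives for a tag, while PR-AUC is more sensitive to false positives near the top of the ranking, which matters when tags are rare.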